Seminar Summary: Software Defined Optics

18 Jan, 2022

Prof. Arka Majumdar is an Associate Professor in the departments of Electrical and Computer Engineering and Physics at the University of Washington. He received his bachelor’s from IIT-Kharagpur (2007), where he was honoured with the President’s Gold Medal. He completed his MS (2009) and Ph.D. (2012) in Electrical Engineering at Stanford University. He spent one year at the University of California, Berkeley (2012-13) as a postdoc before joining Intel Labs (2013-14).

His research interests include developing a hybrid nanophotonic platform using emerging material systems for optical information science, imaging, and microscopy. Majumdar is the recipient of the Young Investigator Award from the AFOSR (2015), ONR (2020), NSF (2019) and DARPA (2021), the Intel Early Career Faculty Award (2015), an Alfred P. Sloan Fellowship (2018), and the UW College of Engineering Outstanding Junior Faculty Award (2020). He is co-founder of Tunoptix, a startup commercializing meta-optics.

The 4 key points we walked away with:

- Overcoming the Limits of Diffraction

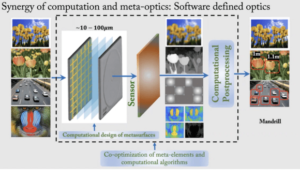

Diffraction is a natural phenomenon and an important tool that allows us to explore the vast intricacies of our world. At the same time, diffraction imposes fundamental limits on how small an optical system can become before its performance suffers. With ever-increasing hardware complexity, the rigors of miniaturisation, demands for better imaging and stringent requirements across various sectors, it is important to ask how a good, reproducible optical system can be built while keeping its size and hardware requirements to a minimum. A novel way to improve and miniaturise optics is to take the path of software: by using suitable mathematical models and designing an appropriate processing pipeline, part of the imaging task can be handled computationally. This computational pathway usually involves capturing information from the subject of interest and then post-processing it in software, using various algorithms to correct aberrations (spherical, chromatic, etc.). The output can be an image of the subject or the result of a task such as depth sensing, object recognition or identification.
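The capture-then-post-process pipeline is commonly written as a forward model followed by an inversion. A minimal sketch, assuming a shift-invariant system (a single point spread function h across the field of view) and additive sensor noise n; the regulariser R and its weight μ are placeholders for whatever prior a given algorithm uses:

```latex
\begin{aligned}
y(\mathbf{r}) &= (h * x)(\mathbf{r}) + n(\mathbf{r})
  \quad\Longleftrightarrow\quad
  Y(\mathbf{k}) = H(\mathbf{k})\,X(\mathbf{k}) + N(\mathbf{k}), \\
\hat{x} &= \arg\min_{x}\; \lVert y - h * x \rVert_2^2 + \mu\, R(x).
\end{aligned}
```

Where the transfer function H has near-zeros (spatial frequencies the optic barely transmits), naive inversion amplifies noise, which is why the regularisation term matters.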

- Aberration-free Imaging with Large-area Meta-optics

Capturing the entire visible spectrum while also offering insight into depth sensing is an interesting area of research. Although dispersion engineering has shown promising results, there is a limit to how large the aperture of a dispersion-engineered meta-optic can be, and this is where software intervention comes into the picture. The inspiration came from earlier research on wavefront coding, a predecessor of some of the computational imaging techniques used today. Wavefront coding has been used creatively to achieve an extended depth of focus (EDOF) by keeping the point spread function (PSF) nearly invariant over a range of focus positions. Because a metalens's focal length shifts with wavelength, this invariance is key to achieving full-spectrum and varifocal imaging. Combining these ideas, an EDOF metalens was designed using a cubic phase mask, and it showed promising results for red, green and blue (RGB) colours. When the EDOF metalens was combined with a computational algorithm such as Wiener deconvolution, the results improved further, but they were still far from the best achievable.
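To make the deconvolution step concrete, here is a minimal 1D sketch of Wiener deconvolution, assuming a known, shift-invariant PSF and a constant noise-to-signal ratio `nsr` as the regulariser. The scene, PSF and `nsr` value are hypothetical; a real pipeline would work on 2D images per colour channel with FFTs, and the naive DFT here only keeps the sketch dependency-free. (For reference, a wavefront-coding cubic phase mask is usually described as adding a phase α(x³ + y³) across the aperture, with α setting its strength.)

```python
import cmath
import random


def dft(x):
    """Naive O(n^2) discrete Fourier transform (keeps the sketch dependency-free)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n)) for k in range(n)]


def idft(spectrum):
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def circular_blur(x, psf):
    """Circularly convolve x with a PSF given as {offset: weight}: y[i] = sum w * x[i - offset]."""
    n = len(x)
    return [sum(w * x[(i - off) % n] for off, w in psf.items()) for i in range(n)]


def psf_to_array(psf, n):
    """Lay the PSF out as a length-n array so that dft() gives its transfer function H."""
    h = [0.0] * n
    for off, w in psf.items():
        h[off % n] += w
    return h


def wiener_deconvolve(y, h, nsr):
    """Wiener estimate X_hat = conj(H) * Y / (|H|^2 + nsr); nsr is an assumed noise-to-signal ratio."""
    Y, H = dft(y), dft(h)
    x_hat = [Hk.conjugate() * Yk / (abs(Hk) ** 2 + nsr) for Hk, Yk in zip(H, Y)]
    return [v.real for v in idft(x_hat)]


def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)


# Usage: blur a 1D "bright bar" scene with a hypothetical PSF, add noise, then deconvolve.
rng = random.Random(1)
n = 64
scene = [1.0 if 20 <= i < 30 else 0.0 for i in range(n)]
psf = {-2: 0.1, -1: 0.2, 0: 0.4, 1: 0.2, 2: 0.1}
measured = [v + rng.gauss(0.0, 0.005) for v in circular_blur(scene, psf)]
restored = wiener_deconvolve(measured, psf_to_array(psf, n), nsr=1e-3)
print(f"MSE blurred:  {mse(measured, scene):.5f}")
print(f"MSE restored: {mse(restored, scene):.5f}")
```

The `nsr` term trades noise amplification against residual blur: at frequencies where |H| is small, the filter stops trying to invert the optic and attenuates instead.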

- Improving Image Quality

Using a novel end-to-end approach built on TensorFlow, the whole image formation process was brought under even finer control. A neural network trained on various parameters formed the computational backend of this hybrid system. The automatic differentiation provided by TensorFlow allowed the complete image formation process to be differentiated and optimised end to end. The pipeline accounts for scattering, diffraction, sensor noise and deconvolution, and the resulting error is fed back continuously to update the design. With this continuous feedback, the system gave “good enough” results.
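The end-to-end idea, with gradients flowing from the reconstruction error back through both the optics model and the reconstruction parameters, can be illustrated with a deliberately tiny toy. Everything here is hypothetical: a 1D scene, a 3-tap PSF whose blur depends on a single focus setting `theta` and on object depth, and a learnable 3-tap reconstruction filter standing in for a neural network. In TensorFlow the gradients would come from automatic differentiation (for example via `tf.GradientTape`); to stay dependency-free, this sketch swaps in central finite differences.

```python
import random

# Hypothetical object depths (arbitrary units) that one fixed optic must image.
DEPTHS = [0.0, 0.4]


def circular_convolve3(signal, kernel):
    """Circularly convolve a 1D signal with a 3-tap kernel centred on its middle tap."""
    n = len(signal)
    return [sum(kernel[k] * signal[(i - (k - 1)) % n] for k in range(3)) for i in range(n)]


def psf_for_depth(theta, z):
    """Toy 3-tap PSF: blur grows as the focus setting theta moves away from depth z."""
    s = min(0.05 + (theta - z) ** 2, 0.3)  # clamp keeps every tap non-negative
    return [s, 1.0 - 2.0 * s, s]


def loss(params, scenes):
    """Mean squared reconstruction error of optics + learned filter, averaged over depths."""
    theta, recon = params[0], params[1:4]
    total, count = 0.0, 0
    for x in scenes:
        for z in DEPTHS:
            y = circular_convolve3(x, psf_for_depth(theta, z))  # toy "optics" forward model
            x_hat = circular_convolve3(y, recon)                 # learnable reconstruction
            total += sum((a - b) ** 2 for a, b in zip(x_hat, x))
            count += len(x)
    return total / count


def numerical_grad(f, params, eps=1e-5):
    """Central finite differences; a stand-in for automatic differentiation."""
    grad = []
    for i in range(len(params)):
        up, down = list(params), list(params)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


def optimize(steps=300, lr=0.5, seed=0):
    """Jointly update the optical parameter and the reconstruction taps by gradient descent."""
    rng = random.Random(seed)
    scenes = [[rng.random() for _ in range(32)] for _ in range(4)]
    params = [0.0, 0.0, 1.0, 0.0]  # [theta, then identity reconstruction taps]
    objective = lambda p: loss(p, scenes)
    history = [objective(params)]
    for _ in range(steps):
        g = numerical_grad(objective, params)
        params = [p - lr * gi for p, gi in zip(params, g)]
        history.append(objective(params))
    return params, history


params, history = optimize()
print(f"focus setting theta = {params[0]:.3f}")
print(f"loss: {history[0]:.5f} -> {history[-1]:.5f}")
```

Because one optic and one filter must serve both depths, the optimiser should push `theta` towards the midpoint of the two depths (0.2, by the symmetry of this toy model) rather than focusing on either one, a small-scale analogue of designing the optics and the software together.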

- Varifocal Imaging and Computational Sensors

An Alvarez lens, whose focal length is tuned by laterally shifting two complementary cubic phase plates relative to each other, can give promising results for varifocal imaging, although much work remains to be done in this area. Computational sensing has also been demonstrated using incoherent light as the source, and the accuracy of object detection went from 85% to 97% when computation was involved.
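The varifocal behaviour follows from the classic Alvarez construction. A minimal sketch, assuming two thin phase plates with complementary cubic profiles ±A(xy² + x³/3), where A is a hypothetical design constant, given equal and opposite lateral shifts ±d along x and with the gap between the plates ignored: the combined phase is quadratic, i.e. a lens whose optical power is linear in d.

```latex
\begin{aligned}
\phi(x,y) &= A\left[(x+d)\,y^2 + \tfrac{(x+d)^3}{3}\right]
           - A\left[(x-d)\,y^2 + \tfrac{(x-d)^3}{3}\right] \\
          &= 2Ad\,(x^2 + y^2) + \tfrac{2}{3}Ad^3, \\
\phi_{\text{lens}}(x,y) &= -\frac{\pi}{\lambda f}\,(x^2 + y^2)
  \quad\Longrightarrow\quad
  \frac{1}{f} = -\frac{2Ad\lambda}{\pi}.
\end{aligned}
```

The constant term is a uniform piston phase that does not affect focusing, and the sign of f depends on the chosen sign convention for A and d.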

The work being done

Arka and his group have created an end-to-end image acquisition system using different configurations of meta-optics. They improve image quality through design algorithms that make use of deep neural networks (DNNs) and other complex algorithms, rendering aberration-free, distortion-free images for image recognition, depth sensing and other applications.

What does this mean for the future?

The marriage of hardware and software seems to be the best way forward to achieve aberration-free imaging. Computation will play a major role in supporting metasurfaces, filling the gaps where hardware alone is limited through the co-optimization of meta-optics and computational algorithms. This will help address some of the issues faced by conventional optics and open new avenues for cost-, energy- and size-efficient imaging systems.

Further reading and enquiries

For more information, please refer to Prof. Arka Majumdar’s research group website: http://labs.ece.uw.edu/amlab/

References:

- https://www.science.org/doi/10.1126/sciadv.aar2114

- https://pubs.acs.org/doi/abs/10.1021/acsphotonics.5b00660

- https://www.osapublishing.org/prj/fulltext.cfm?uri=prj-8-10-1613&id=440037

- https://pubs.acs.org/doi/abs/10.1021/acsphotonics.9b01703

- https://www.nature.com/articles/s41467-021-26443-0

- https://www.osapublishing.org/optica/fulltext.cfm?uri=optica-5-7-825&id=395167

- https://www.nature.com/articles/s41598-017-01908-9

- https://www.nature.com/articles/s41378-020-00190-6

- https://arxiv.org/abs/2106.15807

- https://www.osapublishing.org/ao/abstract.cfm?uri=ao-58-12-3179

- https://pubs.acs.org/doi/abs/10.1021/acsphotonics.0c00354

- https://www.osapublishing.org/prj/fulltext.cfm?uri=prj-9-4-B128&id=449678

- https://arxiv.org/abs/2102.13323